The signals of distrust are everywhere, and not just in politics. We’ve been surrendering our data to service providers for 25 years without much thought, but it’s something we now do begrudgingly. We stopped believing in the promises of convenience after at least three generations of machine learning and generative AI showed us that “personalisation” mostly means “getting you targeted ads, sorry, content”. GDPR and similar legislation had to remind the industry that personal data wasn’t an all-you-can-eat buffet, and that users have rights, but it’s quite clear we haven’t internalised that lesson yet, despite the costly compliance work. And with the acceleration of transformer models, bundled into every single everyday application, a general malaise is growing at the idea of platforms siphoning personal data with no clear exchange value – with more and more examples of open outrage when the platform itself is a Big Tech product.

For those of us who work in digital but never had to apologise to a US Senate subcommittee, this has become a terrain to explore carefully, especially in light of two technological advances: agentic AI and verifiable credentials. You don’t hear about the second as often as you do about the first, although there is a link between them, especially when trust comes into play.

But let’s start with a short trip back to 2019.

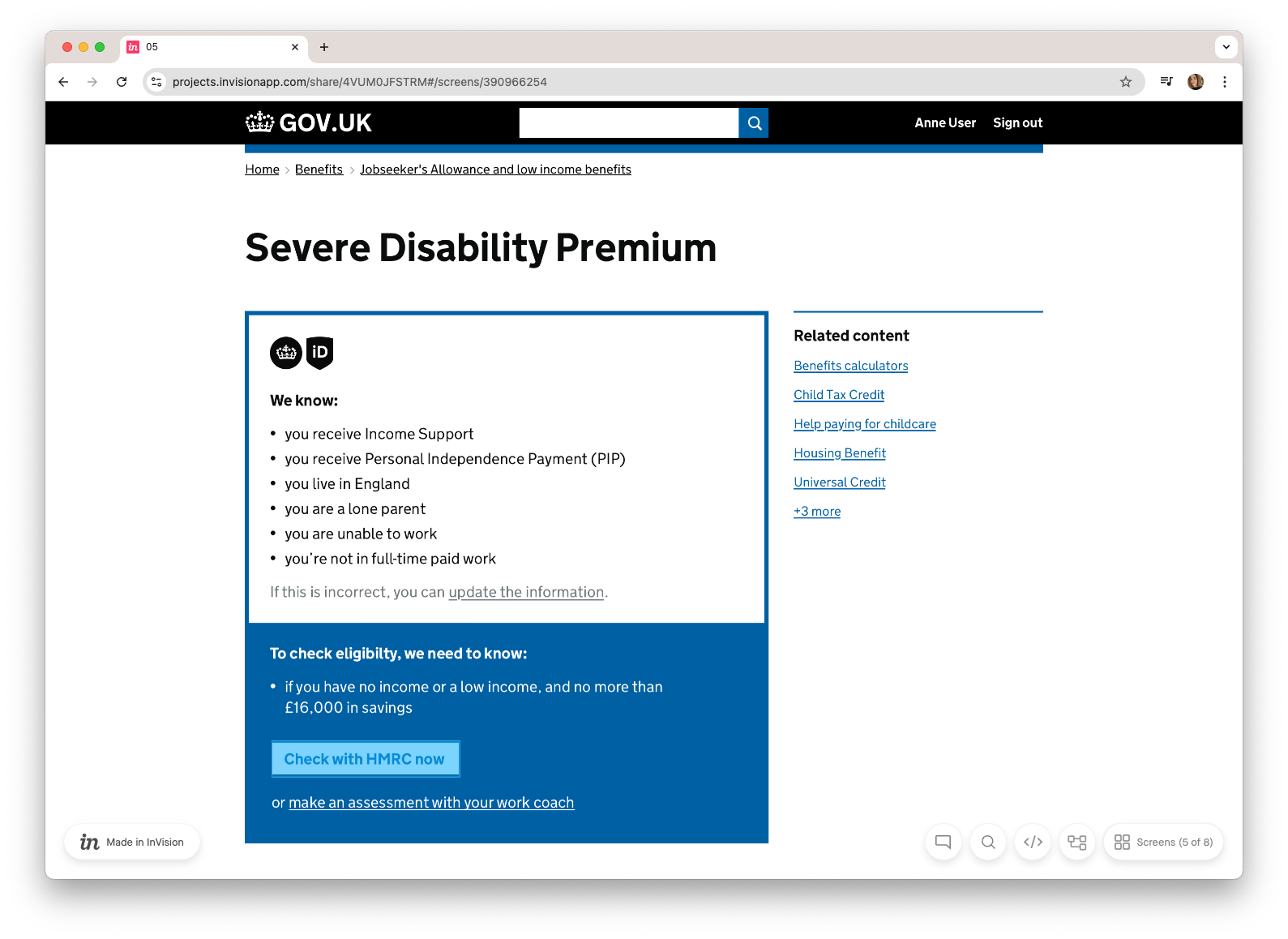

Back then I was part of a team at the Government Digital Service exploring what a personalised GOV.UK might look like with a government account. We were working with early ideas around digital wallets and verifiable credentials before the official standards were out, feeling our way around concepts that had no name at the time. One prototype in particular was used to put some of these concepts into a complete journey, to see what they looked like in practice. It was never implemented and, as far as I know, nothing like it exists today. But I keep returning to it, because seven years later it remains one of the few examples I’m aware of that shows the tangible differences this technology can offer. The screenshots in this article come from that work.

The reason there are so few examples is that verifiable credentials are still operating at the infrastructure and standards layer. The design questions have barely been addressed. What does trust look like as an interface? How do you make a cryptographic handshake legible to someone trying to access a disability benefit? We now have better words and emerging patterns for what we were exploring in 2019. This article, and the one that follows, are an attempt to use them.

Signals, claims, and why the distinction matters

Most of what currently passes as personalisation is built on signals. A signal is data captured automatically when two systems interact: a returning device, a page visited, an intent inferred. The user does not submit it. In many cases they are not aware it is being collected. They have no meaningful control over it after the fact, as you will know if you have ever tried to convince Netflix that your true crime phase was a temporary byproduct of a virulent flu, rather than a lifelong passion.

Claims are different. They’re the information a user knowingly submits: a name, an address, a stated preference. The user has agency over them, at least before they hand them over. Once submitted, that agency largely disappears, and it becomes a matter of subject access requests.

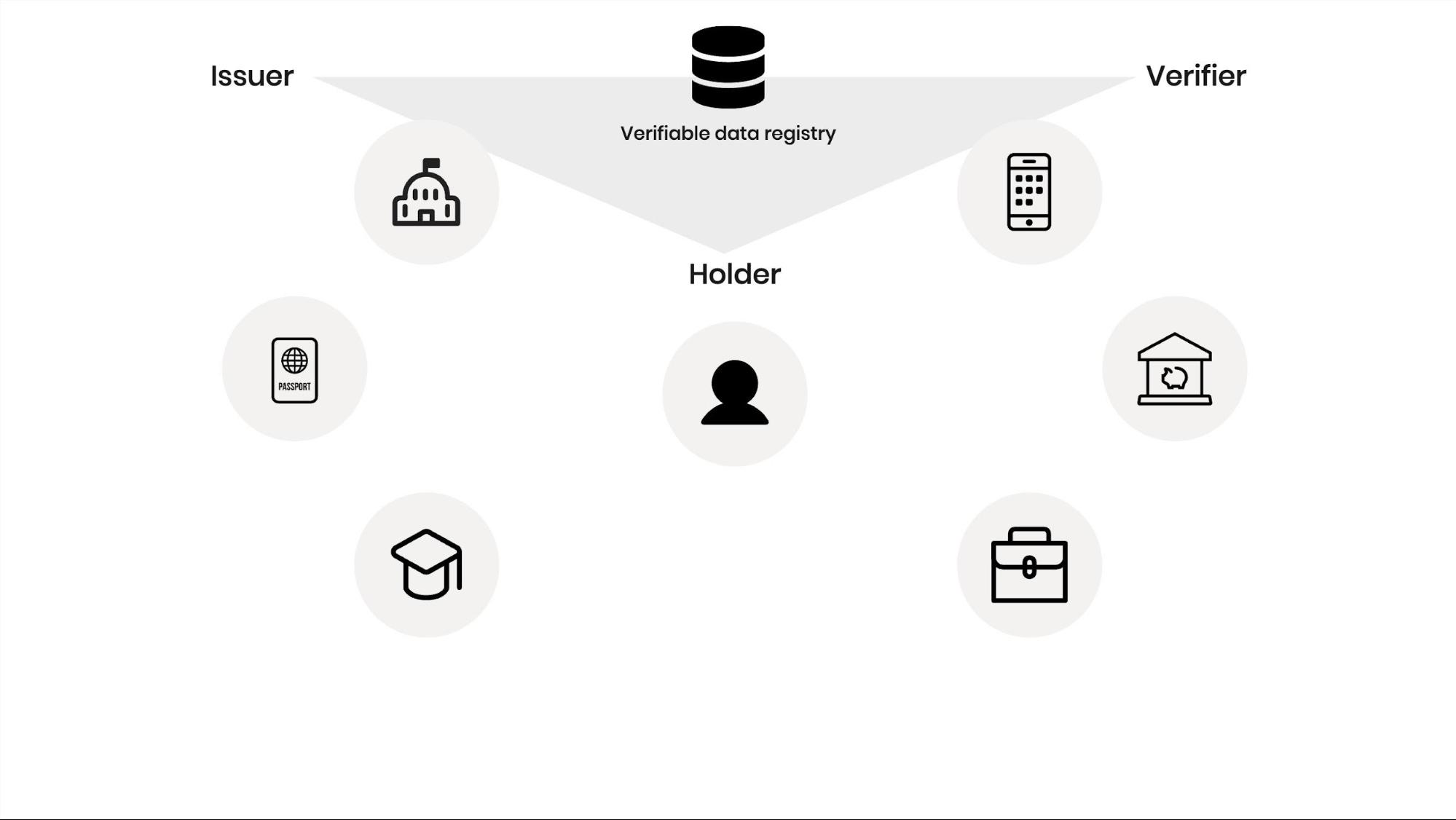

Verifiable credentials are a specific category of claim: data that has been confirmed by an authoritative source, bound by cryptography to the person it describes, and held by the user. The service requests a verification when it’s needed. The issuer confirms the data. The user brokers that sharing.

The distinction matters not just because verified and cryptographically signed data is higher quality, but because of who controls it. Signals are beyond the user's reach. So are claims once they're submitted, and each one is a data point that only has value within one domain. Verifiable credentials keep the user in the loop throughout, and can be reused across different providers. That is a paradigm shift.
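
To make the three categories easier to compare, here is a minimal sketch in Python. The class and field names are my own shorthand for this article, not part of any standard, and the true/false values simply restate the descriptions above.

```python
from dataclasses import dataclass
from enum import Enum


class DataKind(Enum):
    SIGNAL = "signal"              # captured automatically between systems
    CLAIM = "claim"                # knowingly submitted by the user
    VERIFIABLE_CREDENTIAL = "vc"   # issuer-confirmed and held by the user


@dataclass(frozen=True)
class DataProfile:
    # Hypothetical attributes, chosen only to illustrate the distinction.
    user_provides_it: bool          # does the user knowingly hand it over?
    verified_by_issuer: bool        # confirmed by an authoritative source?
    user_keeps_control: bool        # is the user still in the loop after sharing?
    reusable_across_services: bool  # does it have value beyond one domain?


PROFILES = {
    DataKind.SIGNAL: DataProfile(False, False, False, False),
    DataKind.CLAIM: DataProfile(True, False, False, False),
    DataKind.VERIFIABLE_CREDENTIAL: DataProfile(True, True, True, True),
}


def kinds_where(attribute: str) -> list[DataKind]:
    """Return the data kinds for which the named profile attribute is true."""
    return [kind for kind, profile in PROFILES.items() if getattr(profile, attribute)]
```

Calling `kinds_where("user_keeps_control")` returns only the verifiable credential, which is the whole point of the comparison.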

The personalisation spectrum: data reliability and experience automation

I have mentioned “personalisation” a couple of times already, and at this point it’s worth introducing a definition. That’s exactly what we had to do on GOV.UK, after we ended up spinning for a couple of weeks trying to agree on what exactly we meant by it. We realised that the plethora of personalised experiences we were considering had something in common: a level of automation in the user interactions that seemed to increase with the strength of the data points used to power them. Personalisation wasn’t an outcome, but a spectrum. I kept reflecting on that spectrum as the years went on, and this is what it looks like these days.

At the low end sit recommendations: the related content links, the "you might also be interested in" patterns that populate most websites. These are powered by signals and context. They require low trust in the data and produce low automation. They are also the most visible and most frequently broken form of personalisation.

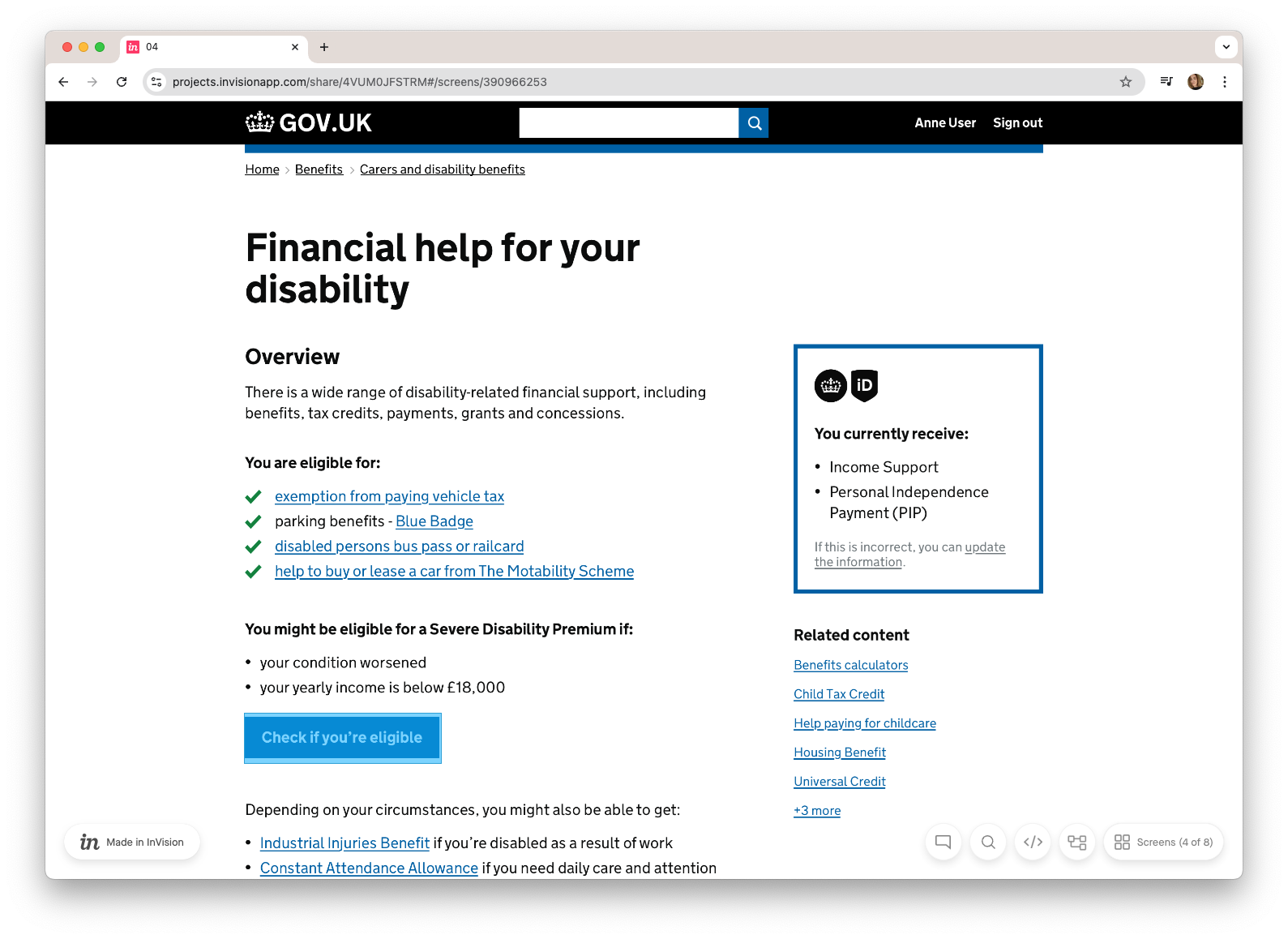

In the middle sit dynamically generated journeys: experiences assembled in real time based on what we know about a specific user, routing them to the services and information relevant to their situation rather than making them navigate a generic structure or guessing their intent. This requires higher trust in data (claims rather than signals, often backed up by a level of data assurance) and the automation dynamically creates a more contextual experience for the user to interact with. This is the tier the GOV.UK prototype was targeting.

At the high end sit automated decisions: the system determines eligibility, applies rules, and acts on the user's behalf without needing their intervention. This requires the highest level of data trust – what we now call ‘verifiable credentials’ – and the highest degree of automation.

The matrix shows clearly where verifiable credentials unlock new territory. Signals can take you to the first tier and no further. Verifiable credentials are what make the second and third tiers possible, and what can make or break their trustworthiness.
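
The spectrum can be written down as a small lookup: each tier has a minimum level of data trust, and a given level of trust unlocks every tier at or below it. This is only a sketch of the idea. The enum names and the numeric ordering are assumptions I am making for illustration, and the middle tier is modelled on claims with some assurance, as described above.

```python
from enum import IntEnum


class DataTrust(IntEnum):
    SIGNAL = 1
    CLAIM = 2                  # assumed to include some level of data assurance
    VERIFIABLE_CREDENTIAL = 3


class Tier(IntEnum):
    RECOMMENDATIONS = 1        # low trust, low automation
    DYNAMIC_JOURNEY = 2        # higher trust, medium automation
    AUTOMATED_DECISION = 3     # highest trust, highest automation


# Minimum data trust each tier needs before it can be offered.
MINIMUM_TRUST = {
    Tier.RECOMMENDATIONS: DataTrust.SIGNAL,
    Tier.DYNAMIC_JOURNEY: DataTrust.CLAIM,
    Tier.AUTOMATED_DECISION: DataTrust.VERIFIABLE_CREDENTIAL,
}


def highest_tier(trust: DataTrust) -> Tier:
    """Return the most automated tier that the given data trust can support."""
    return max(tier for tier, needed in MINIMUM_TRUST.items() if trust >= needed)
```

With signals alone, `highest_tier(DataTrust.SIGNAL)` stays at `Tier.RECOMMENDATIONS`, however much signal data is collected.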

Contextual trust vs panopticon model

The first instinct when building a personalised digital service is to go for the dashboard model. A single authenticated space where users can see everything relevant to them, manage their data, and access services from a central point. Estonia did it. Many governments have been trying to replicate it ever since, usually without two decades of digital infrastructure built from the ground up with exactly this in mind.

“What about life events?” I hear you ask… well, they are tempting and all governments seem to consider them at some point, but they are just a taxonomy, and a very rigid one. They seem to fit a mythical average persona, with all the inclusion issues we will look at in the next instalment.

We resisted both instincts, and I still think it was the right call. A welfare dashboard that attempts to surface all potentially relevant benefits can be overwhelming for many users, and a very significant design challenge. What’s even worse, especially in this historical context, is that it can be seen as proof that there is such a thing as a panopticon.

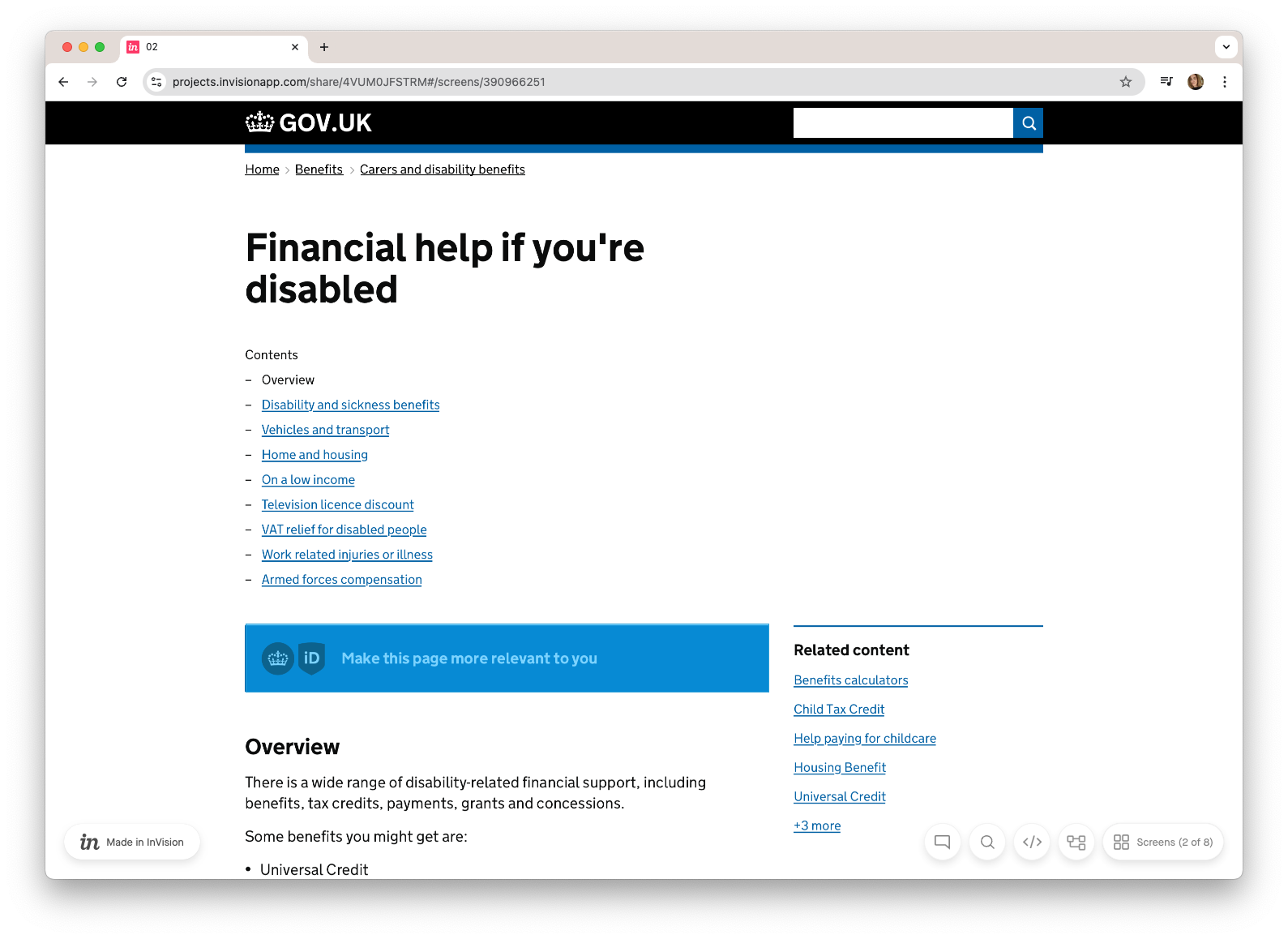

The more useful model is contextual identity verification at the point of need. A user arriving at a page about disability-related financial support is expressing a clear intent. That is the moment to offer a personalised path, scoped precisely to the category of services they are trying to access, using only the credentials relevant to that context. No persistent logged-in state; no data surrender; and no need for a dashboard.

Once the user shares their credentials, the page reloads as a dynamically assembled experience. Not a generic guidance page but a specific set of next steps built from what we know about them: what they currently receive, what they are eligible for, what they might be eligible for if one more piece of data could be confirmed.

This is the middle tier of the spectrum in practice: high trust in the data, medium automation, user in control of what they share and when.
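
A rough sketch of what “scoped to the context” could mean in code follows. The page contexts, credential type names and function names are all hypothetical. Nothing here reflects how the prototype or any real wallet protocol was built. It only shows the two filters at work: the page may ask only for what its context needs, and the user shares only what they agree to.

```python
from dataclasses import dataclass

# Hypothetical mapping from a page context to the only credential types it may request.
CONTEXT_SCOPES = {
    "disability-support": {"disability_benefit_award", "proof_of_address"},
    "childcare": {"child_benefit_award"},
}


@dataclass(frozen=True)
class Credential:
    type: str
    attributes: dict


def build_request(context: str) -> set[str]:
    """Return the credential types this context is allowed to ask for."""
    return set(CONTEXT_SCOPES.get(context, set()))


def share(wallet: list[Credential], requested: set[str], consented: set[str]) -> list[Credential]:
    """Release only credentials that are both in scope and approved by the user."""
    allowed = requested & consented
    return [credential for credential in wallet if credential.type in allowed]
```

Anything in the wallet that is out of scope, or that the user declines to share, never reaches the service, and nothing needs to be kept once the page has been assembled.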

Observable logic: the right to see the machine

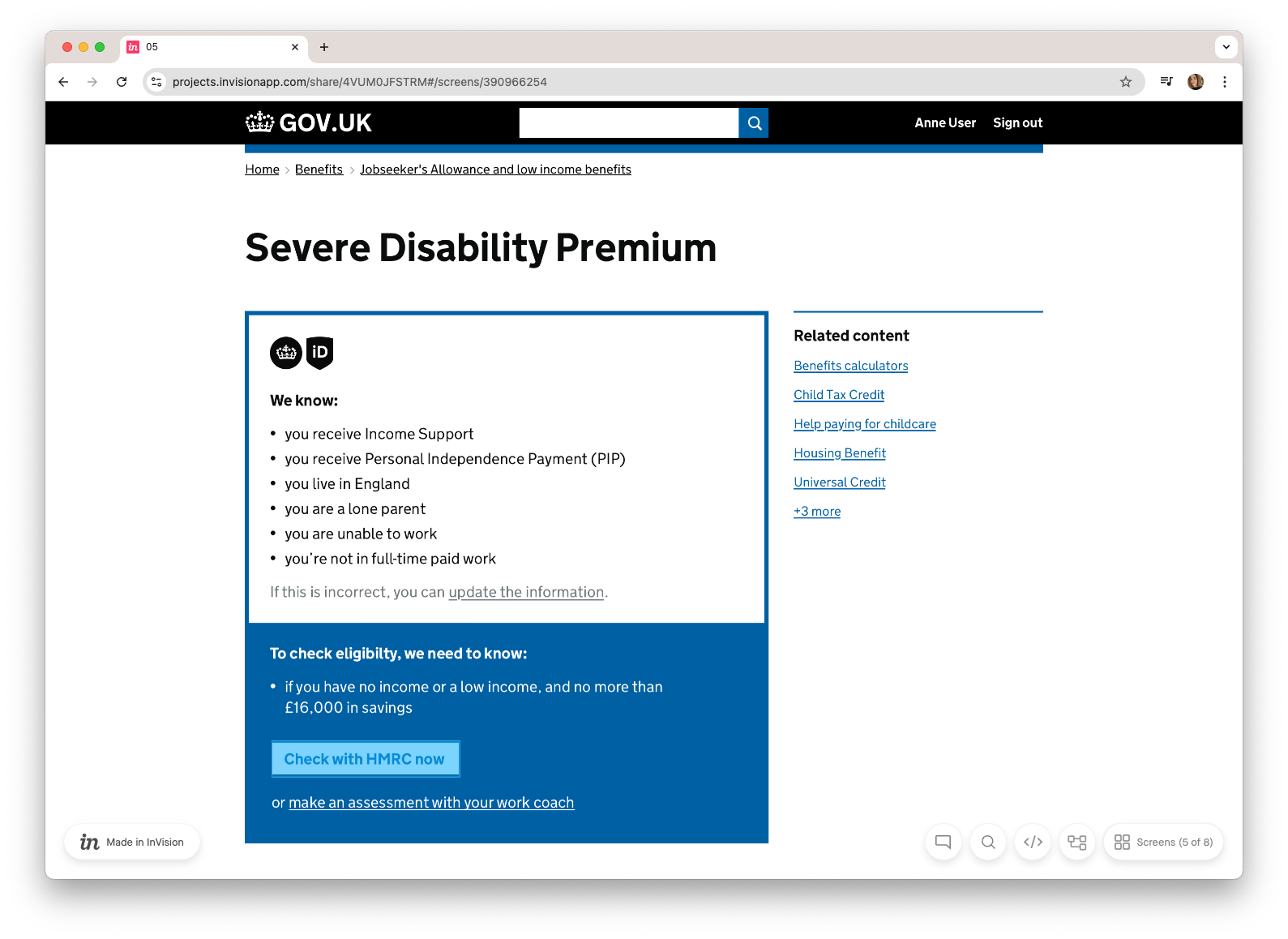

In the next step, the prototype introduced a pattern that, at the time, felt like a practical necessity. Before proceeding to an eligibility check, the system showed the user a summary of the data it was about to use, and gave them the opportunity to correct anything that was wrong.

In retrospect it is one of the most critical patterns in the whole prototype. Replaying the evidence before a decision is made is not a UX nicety. It acknowledges that the system's data may be incomplete or incorrect and it gives the user meaningful agency when they are most likely to need it.

As verifiable credentials enable increasingly automated service journeys, this kind of observable logic should be treated as a design principle. The middle and upper tiers of the automation spectrum are only trustworthy if users can see the reasoning.
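
As a design principle, this can be enforced rather than just encouraged. The sketch below is my own illustration, with hypothetical names throughout: the eligibility check refuses to run until the user has seen the evidence and confirmed it, and any correction resets that confirmation.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class EvidenceReview:
    evidence: dict                                    # data the system is about to use
    corrections: dict = field(default_factory=dict)   # changes made by the user
    confirmed: bool = False

    def current(self) -> dict:
        """Evidence with the user's corrections applied on top."""
        return {**self.evidence, **self.corrections}

    def summary(self) -> list[str]:
        """Human-readable lines to replay to the user before any decision."""
        return [f"{key}: {value}" for key, value in self.current().items()]

    def correct(self, key: str, value: Any) -> None:
        if key not in self.evidence:
            raise KeyError(f"{key} is not part of the evidence being used")
        self.corrections[key] = value
        self.confirmed = False  # a change means the user must confirm again

    def confirm(self) -> None:
        self.confirmed = True


def run_check(review: EvidenceReview, check: Callable[[dict], Any]) -> Any:
    """Run the check only on evidence the user has seen and confirmed."""
    if not review.confirmed:
        raise PermissionError("evidence must be reviewed before a decision is made")
    return check(review.current())
```

The original evidence is kept untouched alongside the corrections, so the difference between what the system held and what the user said remains visible afterwards.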

It is also worth noting something that gets obscured by the current enthusiasm for generative AI: policy rules are deterministic. Eligibility criteria are human artefacts. It is perfectly possible to model a finite set of rules and relationships without statistical inference. Actually, it’s not just possible - it’s recommended, since deterministic systems can be audited. Good luck leaving it to a transformer model.
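
To show how little machinery that takes, here is a minimal sketch of a deterministic rule set with an audit trail. The three rules and the threshold are invented for the example and do not correspond to any real benefit. What matters is that the same facts always produce the same decision, and that every rule's outcome is recorded.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    name: str
    test: Callable[[dict], bool]


# Hypothetical eligibility rules, for illustration only.
RULES = [
    Rule("is_adult", lambda facts: facts["age"] >= 18),
    Rule("is_resident", lambda facts: facts["resident"] is True),
    Rule("income_below_threshold", lambda facts: facts["weekly_income"] < 200),
]


def evaluate(facts: dict, rules: list[Rule] = RULES) -> tuple[bool, list[tuple[str, bool]]]:
    """Apply every rule and return the decision with a per-rule audit trail."""
    trail = [(rule.name, rule.test(facts)) for rule in rules]
    eligible = all(passed for _, passed in trail)
    return eligible, trail
```

The trail is what an auditor, or the user, can read to see exactly which rule led to a refusal.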

Ask for proof, not data

Bad design and marketing practices have mostly desensitised us, but we tend to collect way more data than we need. Keeping requests for information minimal takes an intentional design decision, and lots of design is thoughtless. Data minimisation is probably the most overlooked principle in privacy-by-design.

In many eligibility scenarios, the information required is not "what is this user's income?" but "is this user's income above/below a given threshold?" These are different questions. The first requires disclosure of a specific figure. The second requires only a statement, with no underlying personal data exchanged.

This is the principle behind zero-knowledge proofs, and it is one of the most powerful affordances verifiable credentials introduce. The verifier asks whether a condition is met. The answer, backed by the issuer's attestation, confirms it is or isn't. The underlying figure is never disclosed to the verifier. The user's exposure is minimal.
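
The shape of that exchange can be sketched in a few lines. To be clear about the assumptions: this is not a zero-knowledge proof, and it contains no cryptography at all. It only illustrates the interface, with hypothetical names, where the party holding the data answers a yes/no question and the answer carries no figure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThresholdQuery:
    attribute: str      # what the verifier is asking about
    threshold: float    # the limit set by the eligibility rule


@dataclass(frozen=True)
class ThresholdAnswer:
    attribute: str
    threshold: float
    is_below: bool      # the only thing the verifier learns


def answer_query(private_data: dict, query: ThresholdQuery) -> ThresholdAnswer:
    """Answer the threshold question without returning the underlying value."""
    value = private_data[query.attribute]
    return ThresholdAnswer(query.attribute, query.threshold, value < query.threshold)
```

A verifier asking `ThresholdQuery("annual_income", 20000)` gets back a true or false, and has nothing else to store, leak or repurpose. A real deployment would also need a way to prove that the answer is genuine, which is the part the cryptography provides.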

Designing to know less is one of the clearest signals a service can send that it is built around the user.

The thinning of user accounts

The GOV.UK prototype was still operating on the assumption that personalisation requires a persistent logged-in state, maintained through an account that holds data about the user. We never really explored what the “account” should look like – only the benefits that it could provide.

That assumption deserves a bit of scrutiny now. If users hold their own verified data and present it at the point of need, the service's reason for maintaining an account changes fundamentally. You no longer need the account to store what you know about the user. The data lives with them. What remains in the account is much narrower: explicit preferences, perhaps, or transaction history, but not a data repository.
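
One way to picture a thinner account is shown below, as a sketch under my own assumptions rather than a description of any existing service. The account keeps preferences and transaction references. Attributes presented by the user are passed to the action that needs them and are never written back.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ThinAccount:
    account_id: str
    preferences: dict = field(default_factory=dict)       # explicit user choices
    transaction_refs: list = field(default_factory=list)  # references only, no attributes


def handle_request(
    account: ThinAccount,
    presented_attributes: dict,
    action: Callable[[dict], dict],
) -> dict:
    """Use presented attributes for one action, then keep only the reference."""
    result = action(presented_attributes)
    account.transaction_refs.append(result["reference"])
    return result
```

After the request, the account knows that a transaction happened and how to refer to it, but not what the user's verified attributes were.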

This has significant implications for organisations that have built their personalisation strategy around account data and customer data platforms. The question worth asking now, before the infrastructure is in place and the habits are formed, is what those accounts are actually for. What value does the service derive from holding a persistent relationship with the user, and what value does the user get in return? In a world where the user is the custodian of their own verified attributes, the answer to both questions may be: not a lot.

It also raises a more interesting question: what if users could make their data available on their own terms, and benefit from it directly? There is a gap in the market that’s slowly being explored, user-controlled ad-tech being one example. A different kind of account, owned and controlled by the user rather than the platform, could be the infrastructure through which people manage and selectively share their own preferences.

All these questions will need to be answered with a broader view on policy, governance and the future “product boundaries” of digital ID services: take the recent million-euro fine that Spain's data protection authority levied against a UK digital identity provider for GDPR violations and unlawful processing of biometric data. The company is appealing, and legislation will evolve. But the case points to something the VC ecosystem has not yet resolved: the trust relationship between users and ID providers making their credentials reusable isn’t a given, and ID providers will have to show how they can balance their own business drivers with users’ rights.

What comes next

This article has covered what verifiable credentials make possible. The second one addresses the harder questions, the ones that require not just good design, but also decisions that haven't been made yet. How do we design for the people the system won't naturally reach? What does delegation look like when it is built properly? And what happens to the concept of user control when an agent is the one presenting the credentials?

A note on the work

The 2019 GOV.UK personalisation prototype discussed throughout these articles was the work of a small team who spent time and energy exploring a design territory that had no map. I am very grateful for what I learned working alongside them, in particular Steve Messer, Conor Delahunty, Will Harmer, Lisa Koeman and Erin Raj-Staniland. I am also grateful for the innumerable conversations I have had on the subject since, in GDS, in the early days of One Login, and outside of it, when it became clear that while I had left the job, the job had not left me.

One last caveat: the prototype presented here is one of the many designed back then (Steve’s article lists some other patterns we looked at). It was designed to explain concepts and enable conversations, which is why I feel it’s still useful today, but it doesn’t represent any official policy or planned work, and it’s full of holes and errors – on purpose.