In the first post I covered what verifiable credentials make possible: identity assurance at the point of need, making logic observable, data minimisation, and a possible shift in the role of user accounts. We have design patterns to cover these things, or at least patterns of interactions that aren’t completely foreign.

This second instalment is about concepts that are still emerging. Verifiable credentials are moving fast at the infrastructure and standards layer, while governance and design reflections are slowly catching up – we know what happens when technology, policy and design work in isolation. With no existing large-scale mental model for users, user-centred solutions developed with a multi-disciplinary approach must lead the way, because this gap is where trust could get lost.

Make edge cases the default

Estonia is the benchmark for digital government, and that’s hardly surprising. Its digital infrastructure is sophisticated and has achieved coverage of around 98% of the population. That remaining 2% represents approximately 40,000 people who cannot access online services, a figure that might appear negligible, but we’re still talking about thousands of people excluded from a channel that’s been established as the default way of interacting with government.

In other countries, and especially in the UK, the equivalent number would be dramatically higher, and impossible to ignore. Recent estimates suggest that up to three million people lack any form of photo ID, and the barriers to digital access like connectivity, literacy and accessibility are considerably more widespread.

The risk with any identity infrastructure is that it replicates the exclusion patterns of the systems that preceded it. Verifiable credentials will be no different if they are not designed with deliberate attention to the people they will not naturally reach. But the cruel irony is that the people least able to navigate a digital wallet are often the ones with the most to gain from the services it could unlock, and the most to lose if it doesn't work for them.

Inclusion starts with acknowledging that not everything can be solved by digital alone.

Forcing real omnichannel

Omnichannel and personalisation have something in common: both are words that have lost most of their meaning. In most product and marketing contexts, omnichannel is taken to mean offering an app, a website, and perhaps a chatbot. That is actually multichannel. Omnichannel is a far more complex setup, in which a user can move between channels without losing continuity, without having to repeat themselves, and without the system treating each touchpoint as a separate and unconnected interaction.

In the context of verifiable credentials, true omnichannel means that a person who cannot complete a journey digitally is given the choice to complete it through a physical channel instead. This would enable people who lack a device, connectivity, the ability to navigate the interface – or simply the documents required in the digital route – to access an intermediary to verify the data they need. This is not a new scenario: it happens daily in 600 Jobcentres across the UK. An identity infrastructure that doesn’t take a scenario like this into consideration will fail to cover a critical channel for identity verification, while possibly creating loads of rework and inefficiencies for users and caseworkers alike.

An architecture that allows the creation of valid and reusable checks at the end of a face-to-face journey, and an alternative way to store and share these checks, will ensure that verifiable credentials can make a real difference when it comes to digital exclusion.

Vouching: making the standard a reality

A vouch is a declaration by someone who knows a person: a community leader, a caseworker, a teacher, a professional with recognised standing, or simply someone who can confirm that this person is who they claim to be. The principle is straightforward, as everyone knows someone who can vouch for them. Vouching is particularly relevant for people who are repeatedly asked to provide evidence they do not have: no passport, no driving licence, no footprint in public or private sector records. Millions of people in the UK are in that position, and hundreds of millions worldwide are equally excluded according to World Bank estimates.

Vouching is not a novel idea in the UK: it’s been part of GPG45 (the government’s official guidance on identity assurance) as a standardised evidence type for a few years now, with its own dedicated guidance on how to create and accept a vouch as proof of identity. It’s also part of the UK digital verification services (DVS) trust framework, which defines the rules for how digital credentials will be created and certified across the private and public sector. But despite the standards and the guidance, and the large portion of the population who would benefit from it, it hardly features in the way people access services. And as far as I'm aware, only one certified identity provider in the UK currently offers it as a way to prove someone's identity.

But if we truly believe that inclusion matters, vouching can’t just be an alternative route for people who can’t complete the “happy path” journey: it needs to be a primary pathway, and it needs to extend to a broader set of credentials. Identity assurance has to work for everyone, unless we want to turn the current digital divide into a chasm that is impossible to bridge. According to the latest reports from the World Bank ID4D project, 2.8 billion people still lack access to digital ID – and therefore to all the digital services that require it, especially e-government.

In that sense, the successful adoption of vouching is not just a matter of digital design, but also a critical policy point. Under GPG45, vouching can only provide medium confidence, which means that it can’t be used for checks carrying higher risk. As digital services increase their footprint, service providers need to make sure that the level of identity assurance they require is calibrated to genuine risk, or accessibility issues will start extending far beyond physical barriers.

Show the seams: making the ecosystem legible

We already mentioned the lack of an existing mental model that users can instinctively relate to when using verifiable credentials. We are talking about a complex ecosystem that involves at least three distinct actors: the holder, who owns and presents the credential; the verifier, who requests it; and the issuer, who originally confirmed the data. In practice a single service interaction may involve multiple verifiers and issuers, each playing a different role at a different point in the journey. Behind all of this sits a data registry that validates the relationships between them.

This is uncharted territory for design. The web as we currently experience it operates as a bilateral relationship: we interact with a service, the service does things with our data. On the few occasions where a third party is required, like confirming a payment on a mobile app, journeys tend to break. This was one of the biggest issues faced by the previous digital ID scheme in the UK (Verify) – users were befuddled by finding themselves on a different domain with a different visual identity. Current pilots in the digital wallet space are exploring simple interactions, like an airline ticket booking requiring ID verification. Not all transactions are linear like this one.

We might be tempted to obscure these data handoffs in the name of simplicity, usability or smaller cognitive load, but we’ll have to resist that temptation, because trust will also depend on users understanding what’s happening.

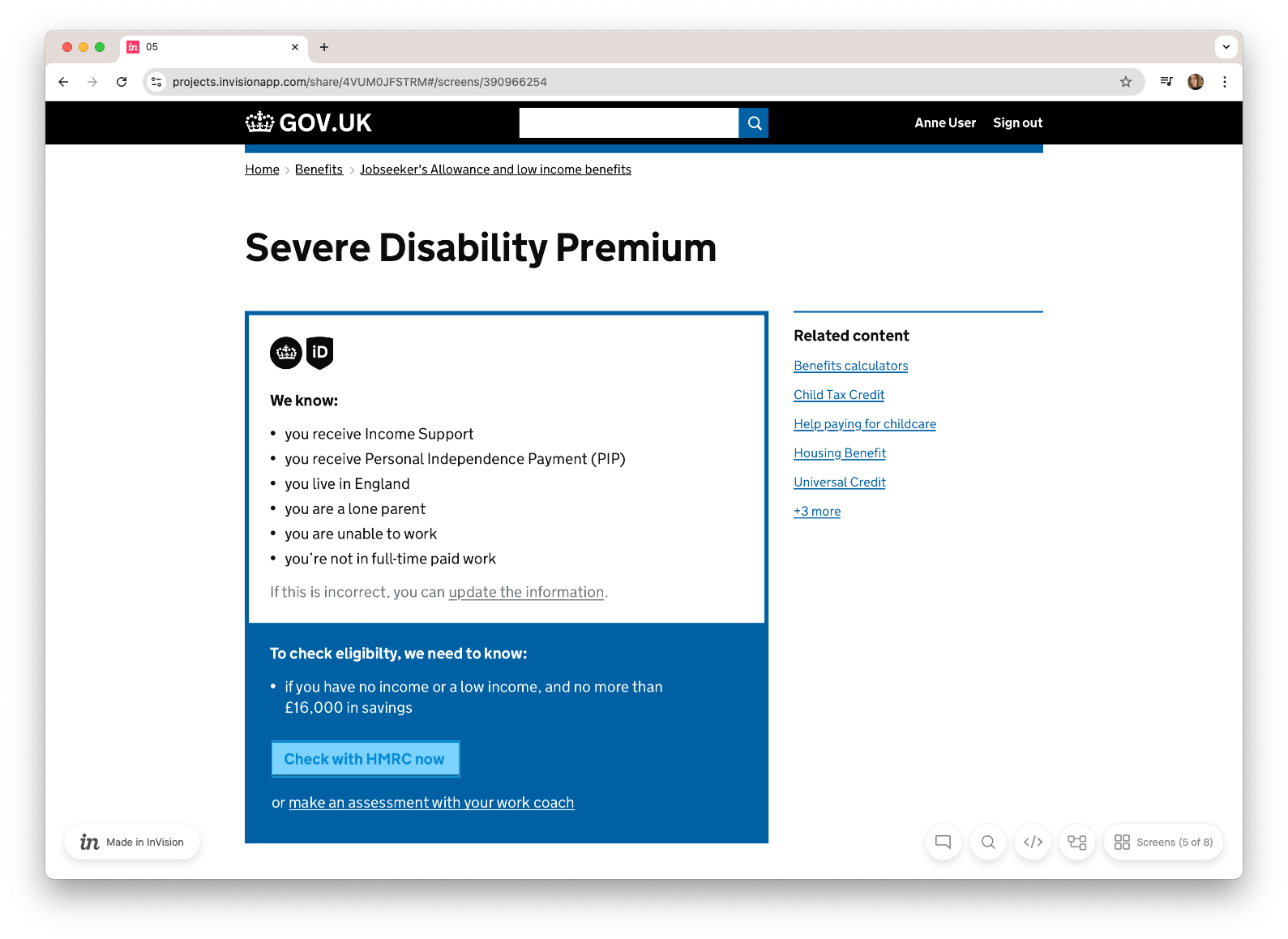

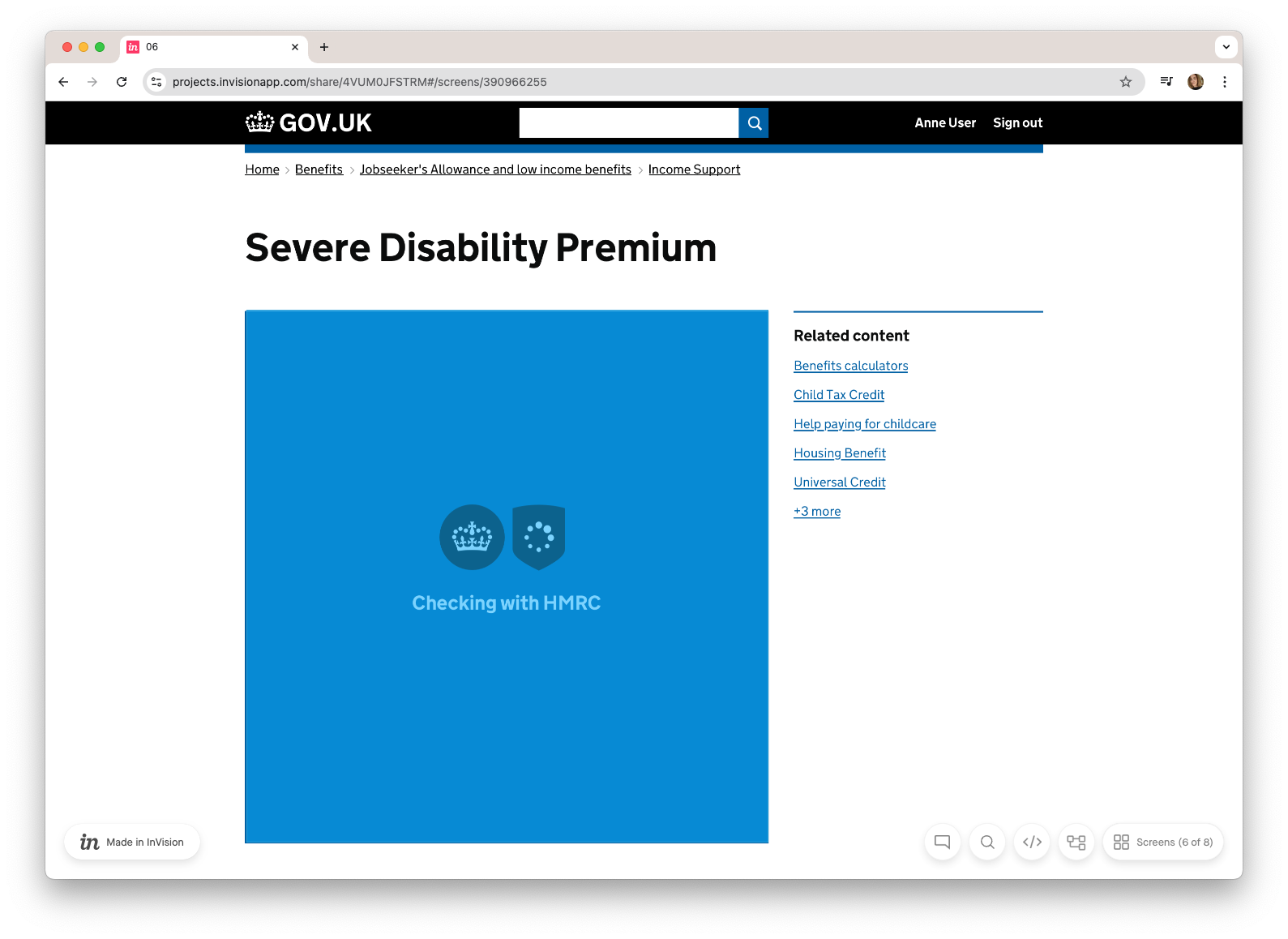

Our prototyping work on personalisation only hinted at the issue, by introducing a progress screen with a loading state announcing a check was performed with HMRC: a basic interaction made legible, with named participants and a visible chain of events.

The more automated the experience, the more important this kind of transparency becomes. Hiding the seams might seem like the right choice in the name of convenience, but in the context of user agency and rights, it can contribute to eroding trust.
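To make the idea of a legible chain of events a little more concrete, here is a minimal sketch of how each handoff could carry the names of the parties involved, so that a progress screen can state them in plain language. The `Handoff` record, its fields, the status values and the "Example Council" issuer are all assumptions made for illustration; this is not how the prototype was built, nor a data model from any wallet specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Handoff:
    """One data handoff in a journey (all field names are illustrative)."""
    verifier: str  # who asked for the data
    issuer: str    # who originally confirmed it
    claim: str     # what is being checked
    status: str    # "pending", "confirmed" or "failed"


def describe(handoff: Handoff) -> str:
    """Turn a handoff into a sentence a progress screen could show."""
    phrases = {
        "pending": "is checking",
        "confirmed": "has confirmed",
        "failed": "could not confirm",
    }
    return (
        f"{handoff.verifier} {phrases[handoff.status]} "
        f"your {handoff.claim} with {handoff.issuer}"
    )


# HMRC is the participant named in the prototype; the second issuer is made up.
journey = [
    Handoff("This service", "HMRC", "income", "confirmed"),
    Handoff("This service", "Example Council", "address", "pending"),
]
for handoff in journey:
    print(describe(handoff))
```

The point of the sketch is that the participants are data, not decoration: if the names of the verifier and issuer travel with every handoff, showing the seams becomes a rendering choice rather than a redesign.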

Delegation: the missing pattern

In UK law, someone acting on someone else’s behalf, outside of explicitly legislated areas like power of attorney, is largely covered by “informal delegation”: there is a broad spectrum of actions and transactions that third parties can take on our behalf for everyday admin, where delegation can be largely inferred (paying bills for someone else) or informally given (financial transactions and dealings with some organisations). UK law leaves this area flexible on purpose. However, delegation bundles together a series of non-explicit agreements that are much harder to represent in a digital transaction. First, we have a principal who gives an agent the authority to act on their behalf with a third party. The agent promises to stand in for the principal and act with the principal’s interests in mind, and at the same time makes themselves liable to the third party, should any issue arise.

Now translate this complexity into a digital interaction, and you will see why delegation hasn’t been fully tackled in digital transactions so far. Can we kick this can further down the road? My guess is we can’t, especially as verifiable credentials are abstracting risk into a separate layer. The W3C specifications have also included the concept of delegation, by making a distinction between the “holder” and the “subject” of a VC. Think of a parent managing a child's health records, a carer accessing benefits on behalf of someone they support, a legal proxy acting for a person who lacks capacity, or a caseworker facilitating access for someone without a digital footprint. These scenarios are routine features of how people interact with public services, and they are relationships that existing legal frameworks already recognise.

Digital systems have almost never been designed for them properly. The result is informal workarounds: people share credentials directly, hand over devices, or ask someone else to complete transactions on their behalf in ways that leave no trace and offer no protection.

Verifiable credentials make it technically possible to build delegation that is explicit, scoped, revocable and auditable. A carer could be granted access to a specific credential, for a specific purpose, for a defined period. Every use could be logged. The scope of what can and cannot be done on the holder's behalf could be defined in advance and changed at any time. All of it requires deliberate design decisions to be made now, before the infrastructure is built with only happy paths of delegation in mind.
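The four properties above (explicit, scoped, revocable, auditable) can be sketched in a few lines. This is a toy model under stated assumptions: `DelegationGrant`, its fields and the credential and purpose names are hypothetical, and nothing here is taken from the W3C data model or from any existing wallet. A real implementation would also need signatures, identity checks on the delegate, and tamper-evident logging.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class DelegationGrant:
    """Hypothetical grant from a principal to a delegate."""
    principal: str
    delegate: str
    credential: str       # scope: the one credential covered
    purpose: str          # scope: the one purpose allowed
    expires_at: datetime  # scope: the defined period
    revoked: bool = False
    audit_log: list = field(default_factory=list)

    def revoke(self) -> None:
        """The principal can withdraw the grant at any time."""
        self.revoked = True

    def use(self, credential: str, purpose: str, now: datetime) -> bool:
        """Return True if this use is inside the grant; log every attempt."""
        allowed = (
            not self.revoked
            and now < self.expires_at
            and credential == self.credential
            and purpose == self.purpose
        )
        self.audit_log.append((now, credential, purpose, allowed))
        return allowed


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
grant = DelegationGrant(
    principal="person-supported",
    delegate="carer",
    credential="benefit-entitlement",
    purpose="renew-claim",
    expires_at=now + timedelta(days=30),
)
in_scope = grant.use("benefit-entitlement", "renew-claim", now)
out_of_scope = grant.use("health-record", "renew-claim", now)
grant.revoke()
after_revocation = grant.use("benefit-entitlement", "renew-claim", now)
print(in_scope, out_of_scope, after_revocation, len(grant.audit_log))
```

Note that refused attempts are logged as well as successful ones: an audit trail that only records what was allowed would hide exactly the events a principal most needs to see.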

Agents and delegation: the AI blindspot about to go mainstream

The W3C specification defines the holder as the entity that owns and manages credentials. At the time it was issued, people like me assumed that the holder would be human. In the age of agentic AI, that assumption needs revisiting.

An agent acting on a user's behalf does not simply present a credential when asked. It makes judgments about when to share data, with which services, under what conditions, and to what end. It acts in ways the user may not be aware of. The consent that governs this activity may have been given once, in a configuration screen completed at setup, under conditions that few users will have fully understood.

This is a categorically different trust problem from the ones discussed so far. A caseworker acting as a delegate operates within a legal framework, with defined responsibilities and accountability. An AI agent operating with access to a user's wallet will operate within whatever constraints were set at configuration.

This is not an issue that can be addressed by designing clear interface patterns for wallets. This calls for an entire ecosystem (agents, wallets, issuers, verifiers, registries) to be architected in a way that keeps the user's interests structurally protected, even when the user is not present in the interaction. The protections need to be built into the standards and governance frameworks that define how agents are permitted to operate, what they are permitted to request, and what recourse users have when things go wrong.

The history of the web offers a warning. The architectural choices made in the early years of online advertising created an infrastructure that took decades to begin regulating and has not yet been meaningfully dismantled. The decisions being made now about how agents interact with identity infrastructure deserve the same scrutiny. We have the benefit of being able to see the pattern, and I believe the commercial and reputational consequences of getting this wrong will force a market correction, probably a regulatory one. However, there's still plenty to be concerned about regarding the unintended consequences this shift will cause if it is addressed only reactively.

The sirens of automation

The full logic of the automation spectrum introduced in the first article ends, predictably, with fully automated decisions: a system that knows who you are, what you have, and what you qualify for, and acts accordingly without requiring you to do anything. This is technically achievable. It is also, at the top end of the spectrum, where the most caution is required.

During the GOV.UK prototype work we made the deliberate decision to not automate the activation of newly unlocked benefits, even when assuming the capability existed. After a successful application, the prototype surfaced a summary of what the user had become eligible for – correlated from their existing credentials and the outcome of the current journey – and presented it as a list of options for the user to act on, rather than a set of decisions made by the system.
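As a rough illustration of that separation between working out eligibility and acting on it, the sketch below builds a list of options from the credentials a user holds and leaves activation to an explicit user choice. The `Option` record, the rule format and every credential and entitlement name are invented for this example; it is not the prototype's logic and does not reflect any real eligibility rules.

```python
from dataclasses import dataclass


@dataclass
class Option:
    """An entitlement the user could choose to activate (illustrative)."""
    name: str
    reason: str              # why the system thinks the user is eligible
    activated: bool = False  # never set by the system on its own


def surface_options(credentials: set, rules: dict) -> list:
    """List entitlements whose required credentials are all held.

    Nothing is activated here; the function only builds the summary.
    """
    return [
        Option(name, "based on: " + ", ".join(sorted(required)))
        for name, required in rules.items()
        if required <= credentials  # subset test: all requirements are met
    ]


def activate(option: Option, user_confirmed: bool) -> Option:
    """Activation requires an explicit choice by the user."""
    if user_confirmed:
        option.activated = True
    return option


# Hypothetical credential and entitlement names, for illustration only.
rules = {
    "entitlement-a": {"proof-of-address", "proof-of-income"},
    "entitlement-b": {"proof-of-disability"},
}
held = {"proof-of-address", "proof-of-income"}
options = surface_options(held, rules)
for option in options:
    print(option.name, "-", option.reason, "- activated:", option.activated)
```

Keeping `surface_options` free of side effects is the design choice that matters here: the system can be as clever as it likes about what a person qualifies for, while the decision to switch something on stays with that person.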

We designed this pattern with a concrete example from Estonia in mind, where registering the birth of a child automatically enrolled parents in child benefit. This always gets mentioned as an exemplar of effective “once only” legislation, one every government should follow. It’s a well-intentioned design pattern, but it doesn’t take into account the fact that some people need to calculate the cumulative effects of benefits on taxes, on other entitlements and their broader circumstances. Automation that removes that calculation removes a decision that belongs to the person it affects.

The design question is not whether automation is technically possible at any given point in a journey. It is whether the human has been given enough information, and enough control, to make the decision themselves by understanding its consequences.

This aspect of automation becomes particularly dangerous in the context of agentic interactions. An agent that activates entitlements, submits applications, or makes eligibility decisions on a user's behalf is making choices that belong to the person whose credentials it holds – even with the best of intentions, and with the most accurate data. Stripping agency from this layer might become a legal, if not philosophical, overreach.

The “experience gap” is a design responsibility

Verifiable credentials will not rebuild trust automatically. They are infrastructure, and infrastructure reflects the intentions of the people who design it.

The first article covered what they make possible. This one has covered what is not yet being designed for: inclusion, delegation, legibility, and the limits of automation. None of these are inherently technical problems.

The window to make these decisions well is open now. The standards are being written. The first implementations are being built. The patterns that get established in the next few years will be the defaults that everyone else inherits. That is how the current web was built, not through malice (mostly) but through a series of defaults that were never seriously questioned until the consequences became impossible to ignore.

We have the benefit of being able to see that pattern clearly. The question is whether we will act on it.

A note on the work

The 2019 GOV.UK personalisation prototype discussed throughout these articles was the work of a small team who spent time and energy exploring a design territory that had no map. I am very grateful for what I learned working alongside them, in particular Steve Messer, Conor Delahunty, Will Harmer, Lisa Koeman and Erin Raj-Staniland. I am also grateful for the innumerable conversations I have had on the subject since, in GDS, in the early days of One Login, and outside of it, when it became clear that while I had left the job, the job had not left me.

One last caveat: the prototype presented here is one of the many designed back then (Steve’s article lists some other patterns we looked at). It was designed to explain concepts and enable conversations, and this is why I feel it’s still useful today, but it doesn’t represent any official policy or planned work, and it’s full of holes and errors – on purpose.